There is no right size for any given experiment. Power calculations become part of the iterative dialogue that leads to the eventual compromise study design, and consideration of the uncertainty of the assumptions and their impact contributes to understanding the robustness of the design. Any study design is a compromise between gaining information and practical constraints of time, availability of participants or samples and funding. The parameters required to estimate study power are rarely known with great precision. Whilst power calculations can be helpful, they are not a panacea. The study needs to be large enough to have an acceptable chance of answering the research question, but not larger than necessary.įor studies with explicit pre-specified hypotheses it is in principal possible to estimate the probability that a study of a given size will answer the question - the power of the study - and many reviewers, funders and ethics committees ask for such calculations. If both d and OR are specified, results will only be computed for the value of d.Choosing an appropriate size for an experimental study is one major component in the design of any research study.

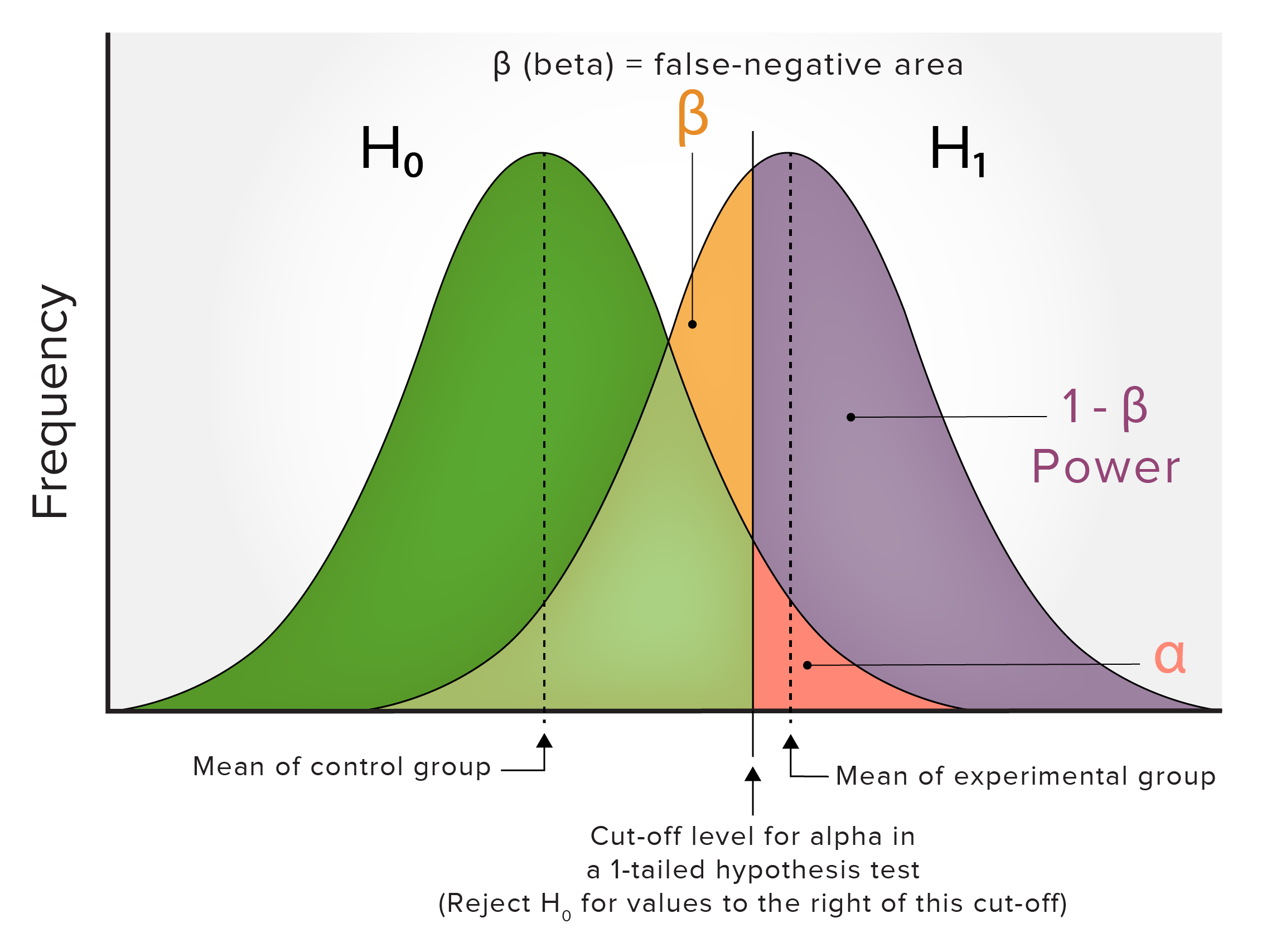

The assumed effect of a treatment or intervention compared to control, expressed as an odds ratio (OR). Effect sizes must be positive numeric values. The hypothesized, or plausible overall effect size, expressed as the standardized mean difference (SMD). The power.analysis function contains these arguments:ĭ. The statistical power can be defined as the probability that the test will detect an effect (i.e. a mean difference), if it exists: Suppose that our null hypothesis states that there is no difference between the means of two groups, while the alternative hypothesis postulates that a difference (i.e. an “effect”) exists. The power of a test directly depends on \(\beta\). This generates a false negative, known as a Type II or \(\beta\) error. Conversely, it is also possible that we accept the null hypothesis, while the alternative hypothesis is true. This leads to a false positive, also known as a Type I or \(\alpha\) error. The first error is to accept the alternative hypothesis (e.g. It is directly related to the two types of errors that can occur in a hypothesis test. The idea behind statistical power is derived from classical hypothesis testing. We already touched on the concept of statistical power in Chapter 9.2.2.2, where we learned about the p-curve method. This also reduces the overall precision and thus the statistical power. Furthermore, many meta-analyses show high between-study heterogeneity. This becomes even more problematic if we factor in that meta-analyses often include subgroup analyses and meta-regression, for which even more power is required. The median number of studies in Cochrane systematic reviews, for example, is six ( Borenstein et al. The number of included studies in many meta-analyses is small, often below \(K=\) 10. Lack of statistical power, however, may still play an important role–even in meta-analysis.

While primary studies may not be able to ascertain the significance of a small effect, meta-analytic estimates can often provide the statistical power needed to verify that such a small effect exists. This is particularly useful when the true effect is small. In most cases, meta-analyses produce estimates with narrower confidence intervals than any of the included studies. Ne of the reasons why meta-analysis can be so helpful is because it allows us to combine several imprecise findings into a more precise one.
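To make the link between effect size, study size, number of studies and power more concrete, here is a minimal Python sketch. It is not the implementation of power.analysis; the function name `power_fixed_effect` and its parameters are hypothetical. It assumes a fixed-effect model, k studies of identical size (n1 and n2 participants per arm), a common large-sample approximation for the sampling variance of the SMD, and a two-sided z-test. Power is computed as one minus the Type II error rate \(\beta\).

```python
from math import sqrt
from statistics import NormalDist


def power_fixed_effect(d, k, n1, n2, alpha=0.05):
    """Approximate two-sided power to detect an SMD of size d when pooling
    k equally sized studies under a fixed-effect model.

    Illustrative sketch only (hypothetical helper, normal approximation).
    """
    if d <= 0:
        raise ValueError("d must be a positive numeric value")
    if k < 1 or n1 < 2 or n2 < 2:
        raise ValueError("need k >= 1 and at least 2 participants per arm")

    # Approximate sampling variance of the SMD in a single study.
    v = (n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2))

    # With k equally precise studies, the pooled variance is v / k.
    se_pooled = sqrt(v / k)

    z = NormalDist()                  # standard normal distribution
    crit = z.inv_cdf(1 - alpha / 2)   # two-sided critical value
    lam = d / se_pooled               # expected z-value if the effect is d

    # beta: probability that the test statistic lands inside the
    # non-rejection region although the effect exists (Type II error).
    beta = z.cdf(crit - lam) - z.cdf(-crit - lam)
    return 1 - beta


if __name__ == "__main__":
    # Assumed illustration values: small effect, 25 participants per arm.
    for k in (1, 5, 10, 20):
        print(k, round(power_fixed_effect(d=0.2, k=k, n1=25, n2=25), 3))
```

With k = 1 the function approximates the power of a single primary study, so running it over several values of k shows how pooling studies raises power for a small effect. Because d, n1 and n2 are rarely known precisely, it can be worth repeating the calculation over a range of plausible values rather than relying on a single result. Between-study heterogeneity is not modelled here and would lower the power further.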
